Source code for deep learning with MNIST data

The script reads the MNIST data with one-hot labels, trains a small dense network, writes summaries for TensorBoard, and saves the trained model.
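To make the one-hot label format concrete, here is a minimal NumPy sketch. The helper `to_one_hot` is hypothetical and only illustrates the layout that `one_hot=True` is expected to produce (one row per image, a single 1.0 in the column of the digit); it is not the loader's own code.

```python
import numpy as np

def to_one_hot(labels, n_classes=10):
    """Hypothetical helper: turn integer class labels into one-hot rows."""
    labels = np.asarray(labels)
    one_hot = np.zeros((labels.size, n_classes), dtype=np.float64)
    # Put a single 1.0 in each row, at the column given by the label
    one_hot[np.arange(labels.size), labels] = 1.0
    return one_hot

digits = [7, 2, 1]
encoded = to_one_hot(digits)

# np.argmax along axis 1 recovers the integer labels from the one-hot rows
decoded = np.argmax(encoded, axis=1)
print(encoded[0])
print(decoded)
```

The same `np.argmax(..., axis=1)` step is what turns one-hot labels or network outputs back into class indices later on.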

%autosave 0

import numpy as np
import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data

def reset_graph(seed=42):
    # Start from an empty graph and fix both seeds so that runs are repeatable
    tf.reset_default_graph()
    tf.set_random_seed(seed)
    np.random.seed(seed)

mnist = input_data.read_data_sets("/tmp/data/", one_hot=True)

n_inputs = 28*28 # MNIST

n_hidden1 = 300

n_hidden2 = 100

n_hidden3 = 150

n_outputs = 10

logs_path = 'tensorboard_logs'

reset_graph()

X = tf.placeholder(tf.float32, shape=(None, n_inputs), name="X")

y = tf.placeholder(tf.int64, shape=(None, n_outputs), name="y")

with tf.name_scope("dnn"):
    hidden1 = tf.layers.dense(inputs=X, units=n_hidden1, activation=tf.nn.relu)
    hidden2 = tf.layers.dense(inputs=hidden1, units=n_hidden2, activation=tf.nn.relu)
    hidden3 = tf.layers.dense(inputs=hidden2, units=n_hidden3, activation=tf.nn.relu)
    # Feed the last hidden layer into the output layer (the original passed hidden2, leaving hidden3 unused)
    logits = tf.layers.dense(inputs=hidden3, units=n_outputs)

with tf.name_scope("loss"):
    xentropy = tf.nn.softmax_cross_entropy_with_logits(labels=y, logits=logits)
    loss = tf.reduce_mean(xentropy, name="loss")
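The loss above is the mean softmax cross-entropy between the one-hot labels and the logits. As a rough illustration of that computation, here is a NumPy sketch; `softmax_cross_entropy`, `toy_logits` and `toy_labels` are made-up names and values, and the function is a plain re-derivation, not TensorFlow's implementation.

```python
import numpy as np

def softmax_cross_entropy(labels, logits):
    """Per-example cross-entropy between one-hot labels and softmax(logits)."""
    # Subtract the row-wise max before exponentiating for numerical stability
    shifted = logits - logits.max(axis=1, keepdims=True)
    log_softmax = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    # With one-hot labels this picks out -log(probability of the true class)
    return -(labels * log_softmax).sum(axis=1)

toy_logits = np.array([[2.0, 1.0, 0.1],
                       [0.0, 0.0, 0.0]])
toy_labels = np.array([[1.0, 0.0, 0.0],
                       [0.0, 1.0, 0.0]])

per_example = softmax_cross_entropy(toy_labels, toy_logits)
mean_loss = per_example.mean()  # plays the role of tf.reduce_mean(xentropy)
print(per_example, mean_loss)
```

The second row has identical logits, so the softmax is uniform over three classes and its loss is log(3), which is a handy sanity check.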

learning_rate = 0.01

with tf.name_scope("train"):
    optimizer = tf.train.GradientDescentOptimizer(learning_rate)
    training_op = optimizer.minimize(loss)

with tf.name_scope("eval"):
    # y is one-hot, so tf.argmax(y, 1) recovers the class index that in_top_k expects
    correct = tf.nn.in_top_k(logits, tf.argmax(y, 1), 1)
    accuracy = tf.reduce_mean(tf.cast(correct, tf.float32))
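With k = 1, the evaluation step amounts to checking whether the largest logit sits at the true class (ignoring ties). A small NumPy sketch of that idea, using made-up values named `toy_logits` and `toy_labels`:

```python
import numpy as np

toy_logits = np.array([[0.1, 2.5, 0.3],
                       [1.9, 0.2, 0.4],
                       [0.3, 0.2, 0.1],
                       [0.0, 0.5, 3.0]])
toy_labels = np.array([[0, 1, 0],
                       [1, 0, 0],
                       [0, 0, 1],
                       [0, 0, 1]])

predicted = np.argmax(toy_logits, axis=1)   # class with the highest score
targets = np.argmax(toy_labels, axis=1)     # class index from the one-hot row
toy_correct = predicted == targets          # one boolean per example
toy_accuracy = toy_correct.astype(np.float32).mean()
print(toy_correct, toy_accuracy)
```

Casting the booleans to floats and averaging them gives the fraction of correct predictions, which is what the `accuracy` node reports.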

init = tf.global_variables_initializer()

saver = tf.train.Saver()

n_epochs = 40

batch_size = 50

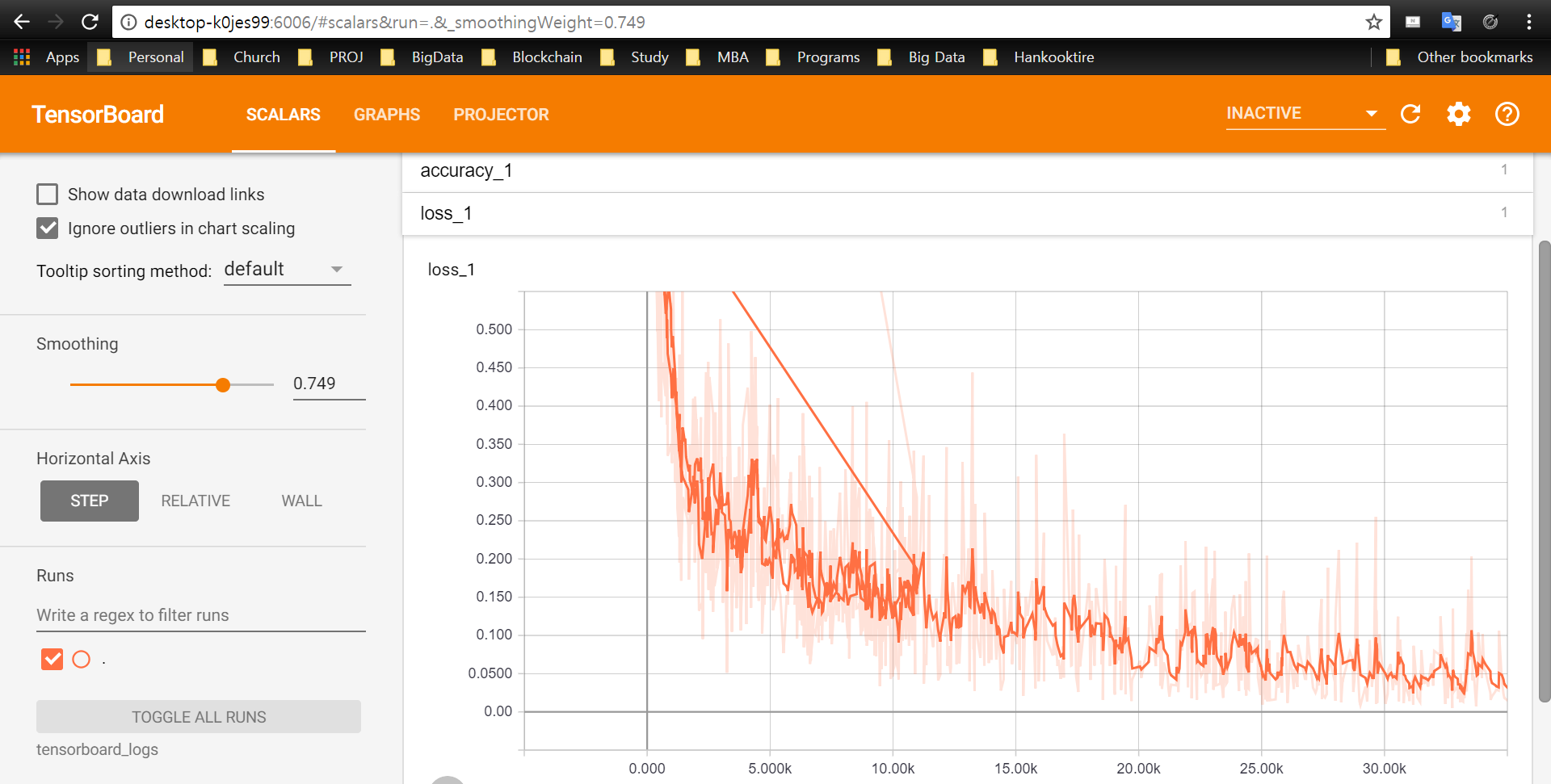

tf.summary.scalar("loss", loss)

tf.summary.scalar("accuracy", accuracy)

merged_summary_op = tf.summary.merge_all()

with tf.Session() as sess:
    init.run()
    count = 0
    summary_writer = tf.summary.FileWriter(logs_path, graph=tf.get_default_graph())
    for epoch in range(n_epochs):
        for iteration in range(mnist.train.num_examples // batch_size):
            X_batch, y_batch = mnist.train.next_batch(batch_size)
            # Run one training step and collect the loss/accuracy summaries for that batch
            _, summary = sess.run([training_op, merged_summary_op], feed_dict={X: X_batch, y: y_batch})
            count += 1
            summary_writer.add_summary(summary, global_step=count)
        # Once per epoch: accuracy on the last training batch and on the full test set
        acc_train = accuracy.eval(feed_dict={X: X_batch, y: y_batch})
        acc_test = accuracy.eval(feed_dict={X: mnist.test.images, y: mnist.test.labels})
        print(epoch, "Train accuracy:", acc_train, "Test accuracy:", acc_test)
    # Flush any pending summaries to disk
    summary_writer.close()
    # Register the input and output tensors so that they can be looked up after reloading
    tf.add_to_collection("X", X)
    tf.add_to_collection("logits", logits)
    save_path = saver.save(sess, "./my_model_final.ckpt")
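The inner loop above runs `num_examples // batch_size` steps per epoch, and `count` is the running step number passed to TensorBoard. The sketch below works through that arithmetic and mimics the batching with a hypothetical generator, `iterate_minibatches`; it assumes the default split of 55,000 training images and is not how `next_batch` is implemented internally.

```python
import numpy as np

def iterate_minibatches(n_examples, batch_size, rng):
    """Hypothetical stand-in for next_batch: yield shuffled index batches for one epoch."""
    order = rng.permutation(n_examples)
    for start in range(0, n_examples - batch_size + 1, batch_size):
        yield order[start:start + batch_size]

rng = np.random.RandomState(42)
n_examples = 55000   # assumed size of the MNIST training split
batch_size = 50
n_epochs = 40

steps_per_epoch = n_examples // batch_size
batches = list(iterate_minibatches(n_examples, batch_size, rng))
total_steps = steps_per_epoch * n_epochs   # final value of the step counter
print(steps_per_epoch, len(batches), total_steps)
```

Under these assumptions there are 1,100 steps per epoch, so the summaries cover 44,000 steps over 40 epochs.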

The next script loads the trained model and predicts the classes of the first 20 test images.

import tensorflow as tf

import numpy as np

from tensorflow.examples.tutorials.mnist import input_data

mnist = input_data.read_data_sets("/tmp/data/", one_hot=True)

tf.reset_default_graph()

with tf.Session() as sess:
    # Rebuild the graph from the .meta file, then load the trained weights.
    # No initializer is needed because restore() assigns every saved variable.
    saver = tf.train.import_meta_graph("./my_model_final.ckpt.meta")
    saver.restore(sess, "./my_model_final.ckpt")
    logits = tf.get_collection("logits")[0]
    X = tf.get_collection("X")[0]
    X_new_scaled = mnist.test.images[:20]
    Z = logits.eval(feed_dict={X: X_new_scaled})
    y_pred = np.argmax(Z, axis=1)

print("Predicted classes:", y_pred)
# The labels are one-hot, so convert them to class indices before printing
print("Actual classes:   ", np.argmax(mnist.test.labels[:20], axis=1))